We spend quite a lot of primary science time getting practical, but is it time well spent? Few of us have as much classroom time as we’d like, so when we do a practical activity, how do we know it’s an effective use of that time?

I’ve recently re-read Abrahams and Millar’s influential 2008 paper on the use of practical work in secondary schools, and I think it has a lot to tell us about practical work in primary schools too.

Abrahams, I. and Millar, R. (2008) Does Practical Work Really Work? A study of the effectiveness of practical work as a teaching and learning method in school science. International Journal of Science Education.

The paper analyses what it means for a practical activity to be effective with secondary pupils. In this post, I have unpicked it to see whether it also applies to primary practical science. I think it does.

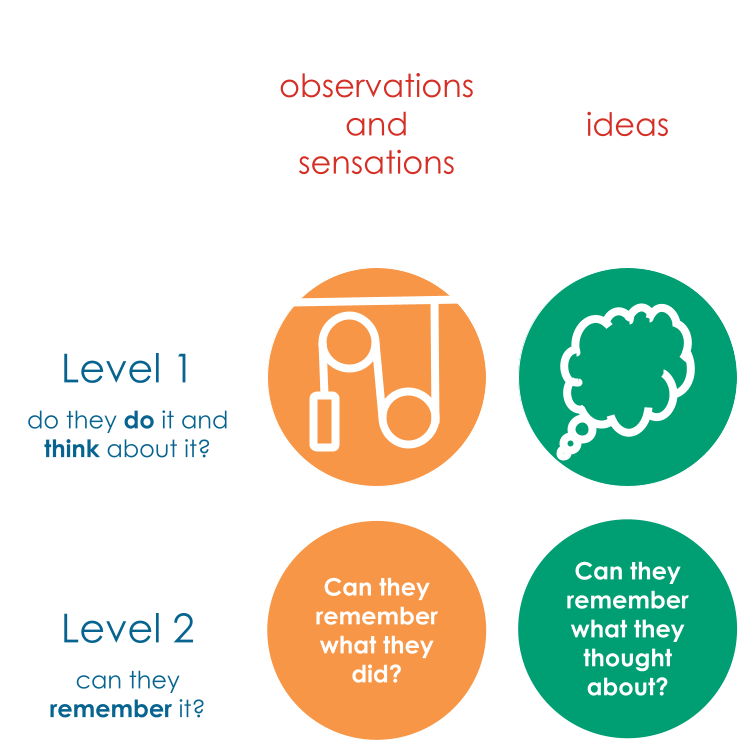

Two Levels of Effectiveness of Practical Work

The authors consider effectiveness at two levels. Level 1 is about carrying out the activity, both physically (do pupils complete the task successfully?) and cognitively (do they think about the right things?).

If pupils succeed at either or both of these, there is a chance that they have learnt something, but it isn’t guaranteed. Level 2 asks whether the pupils remember what they did and/or what they thought about.

- Effectiveness Level 1 – did the students do what the teacher intended?

  - Did students carry out the practical activity as planned? Did they get the anticipated result?

  - Did students think about the concepts the teacher intended? Were the concepts too difficult for them? Was there too much to think about at once? Were the pupils distracted by other things?

- Effectiveness Level 2 – did the students learn what the teacher intended?

Is this analysis appropriate for primary science?

The key clarification that I think is needed is what we mean by the word ‘data’ in the observables column. It is helpful to classify types of data and think about when we might use them.

- Continuous data: temperature, mass, length and other things you can measure. We measure the height of a bean plant; the temperature of different objects; the force needed to pull a toy across different surfaces.

- Ordinal data: tallest to shortest; fastest to slowest; hottest to coldest. This data is often used with younger children. Which magnet is the strongest? Put the beans in order of highest to lowest. Which shadow is the longest?

- Discrete data: how many legs does it have (presumably a whole number!)? How many types of bird did you see?

- Categorical data: which group does it belong to? Is it an insect or a spider? Is it man-made or natural? Is it a conductor or an insulator?

These types of data are all used by scientists, but some are simpler for younger pupils to manage (e.g. ranking the snails from fastest to slowest is more straightforward than measuring their speed).

So, Abrahams and Millar’s analysis works for children from reception to secondary school. But is there something missing? Do we care about the sensations and physical experiences children have? For example: rubbing balloons on your hair, growing cress, going on a bug hunt. These are observables too, but the data isn’t simple to classify. If we allow a broad definition of data that includes sensations, the analysis holds up well. The question of whether pupils can observe the sensations (Level 1) and learn from them (Level 2) is relevant for all learners.

Would we expect younger pupils to think about and learn from practical experiences (the domain of ideas)? If we ask pupils to notice that objects left in the sun are warmer than objects in the shade, we are guiding them to think about their experiences (Level 1) and remember them (Level 2). Teachers of very young pupils might ask:

- Why do woodlice like to hide under logs?

- Why is it harder to push the buggy on the grass?

- Which material keeps the toy dry?

… and expect a reasonable answer. Therefore, the analysis is still useful for children as young as reception and nursery.

When might you use this analysis?

Lesson planning

- Learning intentions: what do you want the pupils to learn?

  - Is it ‘observable’? Can they recall at a later time what they experienced?

  - Is it conceptual? Can they show understanding of the idea?

- Lesson activities: what do you want them to do? What do you want them to think about? Does the activity support one or both of these?

Checking for understanding

We know that we should constantly check that pupils are being successful, but that can be difficult in practical work. The analysis helps by focusing attention on what we mean by success:

- What are the key Level 1 things to check during the lesson?

  - ‘Observables’ – is the practical activity working? What are the key things that might go wrong? How can I check this quickly and efficiently?

  - ‘Ideas’ – what do I want them to be thinking about or noticing? How can I check whether they are all thinking about the right things? What can I do to prompt them to think about the right things?

Self-evaluation and lesson observation

Teachers hopefully ask themselves: how did that go? With practical work, we typically look at the surface features: did the practical work? This analysis helps us look beyond that to what we really mean by success.

It can be difficult for colleagues observing the lesson to know what to look for. Surface markers of success are valid (Is it calm? Are pupils busy? Do they know what they are doing?), but they are only part of the picture. It is also important that pupils are thinking about relevant things.

For both self-evaluation and lesson observations, the analysis focusses attention on:

- Level 1: Are the pupils doing the activity successfully? Are they thinking about the right things? How do you know?

- Level 2: Can the pupils remember key things from a previous practical activity? Can they remember the key ideas underpinning it?

Coaching and CPD

Finally, I think the analysis is a useful tool for coaching teachers to improve the effectiveness of their science lessons. The coach can ask questions about Level 1 and Level 2, and about doing and thinking, to generate a productive conversation about practical work.

I hope you find it useful too.

Ben